Over the years I have training and developing Machine Learning algorithms and it didn’t take long before I noticed that I needed to find a way to take my models into production then I delved into the world of MLOps, actually I heard about it first from Pau Labarta Bajo, in this X post and it was actually through this PyData workshop were Jim Dowling, CEO of Hopsworks, talked about building production Machine Learning systems with only python using serverless service, that I got more exposed to MLOps

So, in this post I would just like to share why MLOps should be done more.

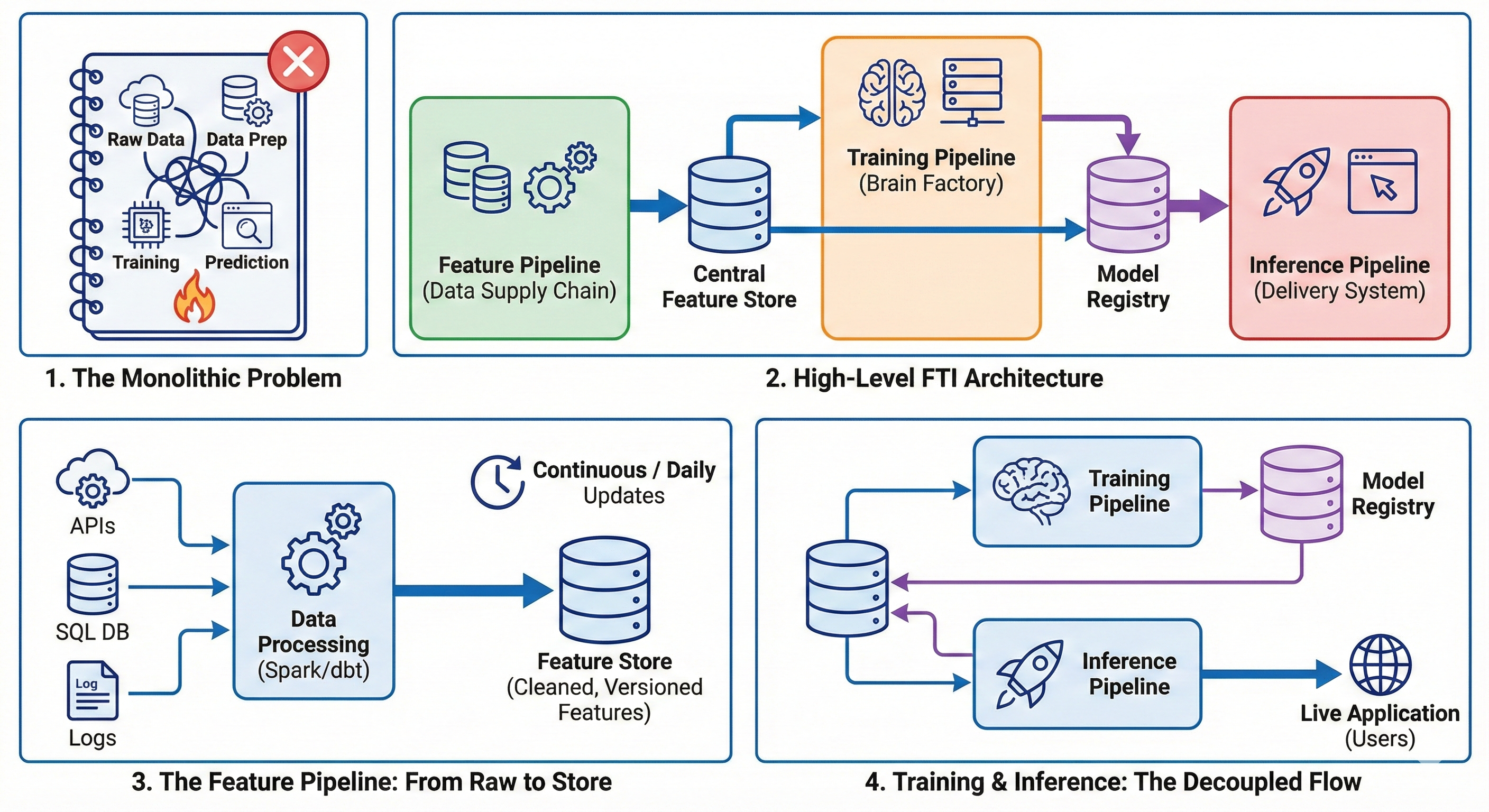

The Monolith Problem Link to heading

If your early days of machine learning were anything like mine, your workflow probably looked exactly like this: You open up a Jupyter Notebook. In the first few cells, you write some code to pull data from a CSV or an API. Next, you spend 50 cells cleaning it, dropping nulls, and doing feature engineering. Finally, you train your model, get a great accuracy score, and think, “Awesome, I am ready for production!”

But here is the harsh reality: Jupyter Notebooks are for science experiments, not software engineering.

When you try to take that massive, monolithic script and deploy it to a live server, things start breaking. This is the Monolith Problem. Early ML projects relied heavily on these manual, siloed workflows, which lack the scalability, reproducibility, and automation needed for real-world applications.

Here is why the “all-in-one” pipeline falls apart in production:

The “All or Nothing” Failure: In a monolith, if a single upstream data source changes its schema (like a frontend developer adding a new category to a dropdown list), your entire data pipeline might quietly break. If the data extraction fails, your model can’t make predictions because everything is tied together.

Resource Waste & Scaling Issues: Training a model requires expensive, high-powered compute like GPUs. Fetching and cleaning data usually just requires a basic CPU. If they are entangled in one script, you are paying for an expensive GPU to sit idle while it waits for data to download. You lose the ability to scale different parts of your system independently.

Training-Serving Skew (The Silent Killer): This is the most dangerous issue. In a monolith, features are often computed one way during the model’s training phase, but then a backend engineer has to rewrite that same feature logic for the live production API. These subtle differences mean the model that got 95% accuracy on your laptop might predict complete garbage in production, and because no actual code crashed, it goes completely unnoticed.

To fix this, we need to stop treating ML like a single massive script and start treating it like a modular, distributed system.

FTI? Link to heading

To solve the Monolith Problem, the industry had to rethink how we build ML systems. The solution is the FTI Architecture.

FTI stands for Feature, Training, and Inference.

Instead of writing one massive script that tries to do everything, the FTI pattern breaks your machine learning workflow down into three completely independent, decoupled pipelines. Think of it like the classic separation of concerns in software engineering (like splitting a web app into a Database, a Backend API, and a Frontend UI).

Here is why this separation changes everything:

Modularity: Because the pipelines are separate, they can be written in different languages, managed by different teams, and scaled on completely different hardware.

Reusability: You stop rewriting the same data-cleaning code for every new project.

Solving the Skew: This architecture completely eliminates “Training-Serving Skew” by forcing both your training model and your live production app to pull data from the exact same central source.

At the center of these three pipelines are two crucial pieces of shared storage that act as the glue: a Feature Store (for data) and a Model Registry (for the trained algorithms).

Let’s break down exactly what each of these three pipelines does.

Feature Pipeline Link to heading

The Feature Pipeline is the foundation of the FTI architecture. Its only job is to extract raw, messy data from your sources (like APIs, SQL databases, or event logs), clean it up, and engineer it into useful features.

The Output: This pipeline does not talk to a machine learning model. Instead, it outputs all that clean data into a centralized database called a Feature Store.

Why it’s separate: Raw data is constantly changing. This pipeline needs to run continuously (or on a daily schedule) to keep your data fresh, entirely independent of your modeling process. By dumping everything into a Feature Store, you guarantee that both your training environment and your live production environment are pulling the exact same, version-controlled data.

Training Pipeline Link to heading

If the Feature Pipeline is the supply chain, the Training Pipeline is the “Brain Factory.” This pipeline pulls a historical snapshot of your data from the Feature Store and uses it to teach an algorithm how to find patterns.

The Output: It produces a trained algorithm and saves it into a version-controlled Model Registry.

Why it’s separate: Training an AI model is computationally heavy and often requires expensive hardware like GPUs. You do not want to run this process every time a user interacts with your app. You only trigger the Training Pipeline when necessary, like when you have accumulated enough new data, or when your current model starts to degrade.

Inference Pipeline Link to heading

The Inference Pipeline is the delivery system. It is where your trained model actually meets the real world and starts generating value by making predictions on new, unseen data.

The Output: Live predictions served directly to your users, usually via a REST API or a web dashboard.

Why it’s separate: Inference is all about ultra-low latency and high reliability. When a user asks for a prediction, this pipeline instantly downloads the latest approved model from the Model Registry, grabs the freshest features from the Feature Store, and calculates the answer in milliseconds. Because it is completely decoupled, if your heavy Training Pipeline crashes on a Tuesday, your Inference Pipeline will happily stay online, serving predictions using Monday’s model.

Adopting the FTI architecture forces you to build modular, scalable, and resilient systems. It eliminates the headaches of training-serving skew and lets you update your data without breaking your live applications.

If you are still deploying monolithic notebooks, give the FTI pattern a try on your next project. It might just change how you build ML systems forever.